AI assistant traffic is not just direct traffic: how to measure ChatGPT, Perplexity, and Claude without fooling yourself

- 27 Apr, 2026

Over the last few months, more marketing teams have started asking the same question: “Are we getting AI traffic now?”

The question is fair. ChatGPT, Perplexity, Claude, and other interfaces now show links to websites more often. Some teams can already see those visits in their dashboards. Others notice direct traffic going up and jump to the conclusion that “AI tools are sending direct traffic.”

That reading mixes together several very different realities.

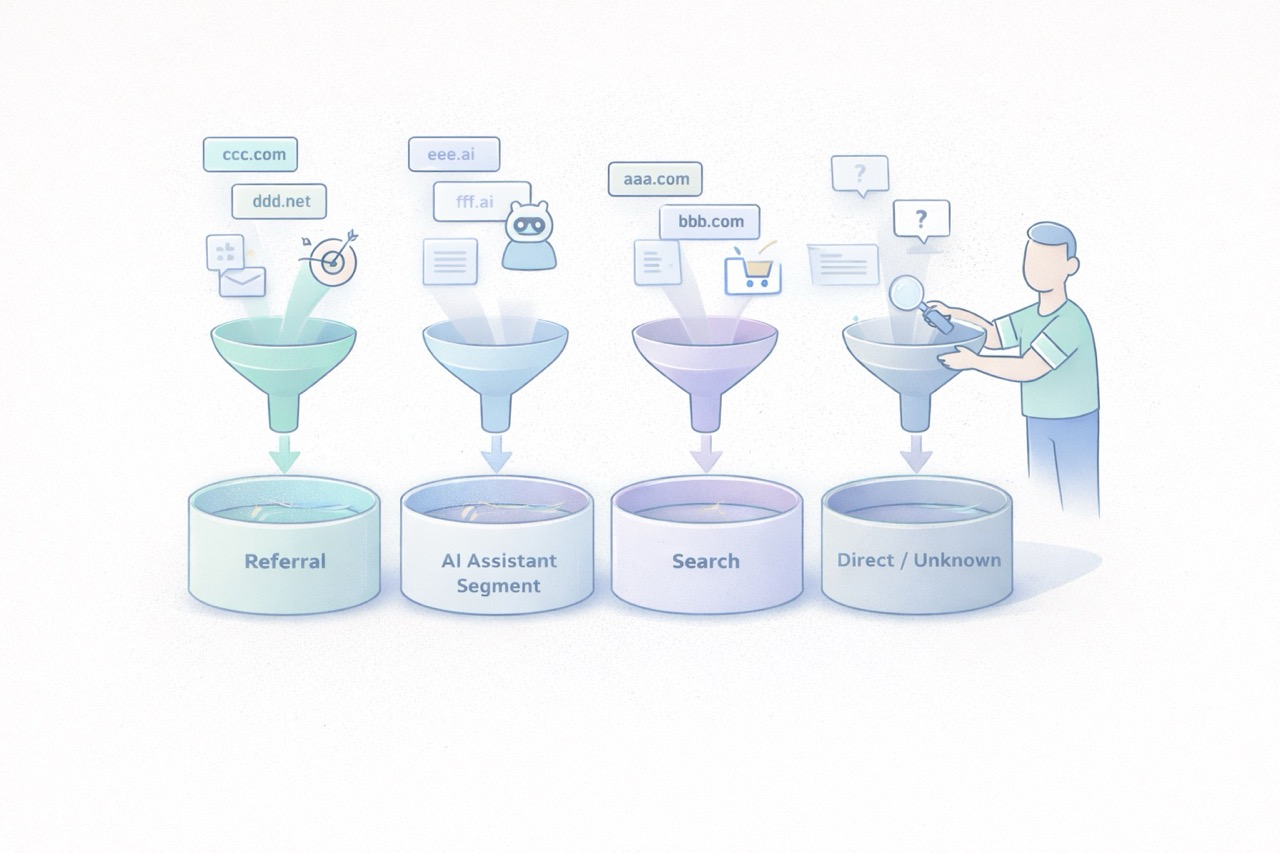

Some traffic from AI assistants is measurable as normal referral traffic. Some of it ends up in direct or unknown because no usable referrer is passed along. Another part never appears in your analytics at all because there was no click. And when Google blends AI experiences into Search, the line gets even blurrier.

In other words, AI traffic is neither a perfectly clean new channel nor a pure illusion. It is a mixed set of behaviors that needs to be read carefully.

The goal is not to measure everything perfectly. The goal is simpler and more useful: separate what is truly attributable, document the gray area, and avoid building a story on top of fragile numbers.

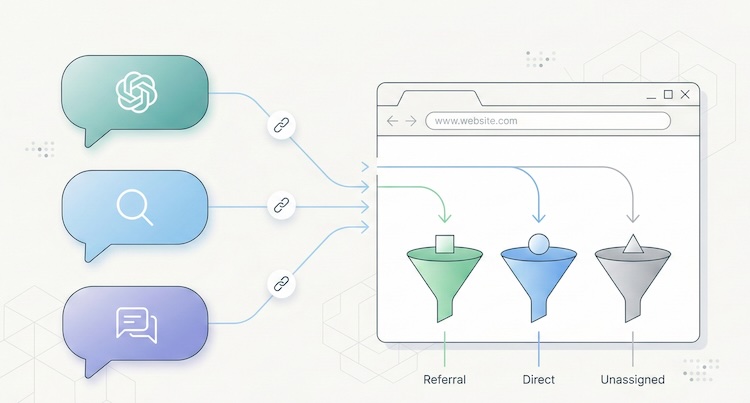

AI traffic is not one technical source

The first thing to clarify is simple: “AI traffic” is not a single analytics category.

In practice, teams usually mix together at least four different cases.

1. Assistants that send a real referrer

Some ChatGPT, Perplexity, or Claude experiences show links to web sources. When a user clicks from an interface that passes usable source information, your analytics tool may see a referring domain.

This is the easiest part to measure.

It behaves like regular referral traffic:

- a visit arrives with an identifiable source domain;

- a landing page is viewed;

- the visitor may convert, bounce, or continue browsing.

This case alone is enough to justify a dedicated segment. Plausible, for example, documented a strong increase in referral traffic from ChatGPT, Perplexity, Claude, and Phind in 2024. That is not a universal benchmark, but it is a useful signal: AI assistants can send visible, usable traffic.

2. Assistants or apps that do not pass clean source data

Not every click is passed through cleanly.

Fathom’s documentation explicitly notes that “Direct/unknown” traffic can come from direct visits, email, apps, or any situation where no referrer was passed, and that no analytics platform can control this.

This is where many teams get the story wrong.

A rise in direct traffic does not prove that AI assistants caused it. But the reverse is also true: some traffic from AI assistants may get absorbed into direct or unknown if the technical context does not pass usable source information.

3. AI answers that cite you without sending a click

This is a critical point.

Your content can be cited, summarized, or used as a source in an assistant answer without producing a visit to your site.

When that happens, your web analytics sees nothing.

You may have gained visibility. You did not gain a session. Treating those two things as the same will quickly distort your analysis.

4. AI experiences embedded inside traditional search

Google is a special case. Google presents AI Overviews and AI Mode as Search features that can show links to websites and that do not require separate SEO tactics beyond the usual fundamentals.

For measurement, that means something straightforward: traffic driven by AI will not necessarily appear as a cleanly separated channel, especially when the experience remains embedded in an existing search environment.

So avoid overly binary logic such as:

- “standalone assistant = AI traffic”;

- “search engine = standard SEO traffic.”

In reality, the line is becoming more porous.

What you can actually measure today

The good news is that you can already measure several useful things without building an oversized setup.

Visible referring domains

This is the foundation.

If your analytics tool exposes referrers or sources, you can identify visits attributed to domains tied to AI assistants. Depending on your stack, that may happen through:

- a Referrers report;

- a Sources report;

- a custom segment;

- a dedicated channel group.

Google Analytics 4 even includes an explicit example of a custom channel group called “AI assistants,” with matching rules for assistants such as ChatGPT, Gemini, Copilot, Claude, and Perplexity.

That matters. It shows that in 2026, even Google Analytics treats this as a real analysis use case, not a niche curiosity.
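As a rough illustration of what such matching rules do, here is a minimal Python sketch that sorts a raw referrer into an "AI assistants" bucket, an ordinary referral, or direct/unknown. The domain list, the channel names, and the function name `classify_referrer` are assumptions made for this example, not GA4's actual rules; keep only the domains that really show up in your own referrer report.

```python
from urllib.parse import urlparse

# Hypothetical mapping from referrer hostnames to assistant labels.
# These domains are assumptions for illustration; verify each one
# against the referrers you actually observe.
AI_ASSISTANT_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer):
    """Return (channel, label) for a raw referrer string or None."""
    if not referrer:
        # No referrer was passed: this is the direct/unknown gray zone.
        return ("direct_unknown", None)
    # Some tools expose only a hostname; add "//" so urlparse sees a host.
    candidate = referrer if "//" in referrer else "//" + referrer
    host = (urlparse(candidate).hostname or "").lower()
    if not host:
        return ("direct_unknown", None)
    for domain, label in AI_ASSISTANT_DOMAINS.items():
        # Match the domain itself or any subdomain, but not lookalikes.
        if host == domain or host.endswith("." + domain):
            return ("ai_assistants", label)
    return ("other_referral", host)
```

A visit with no referrer stays in `direct_unknown` rather than being guessed into the AI bucket, which keeps the segment conservative.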

Landing pages that capture those visits

Volume alone is rarely helpful.

The more useful question is: which pages attract this traffic?

If three articles, two product pages, and one comparison page capture most visits from AI assistants, you already have a practical reading:

- which assets are being cited or surfaced;

- which topics are emerging;

- which pages function as entry points;

- which pages deserve improvement.

That is often more useful than a single session count.
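If your tool lets you export visits, the landing-page view can be sketched in a few lines of Python. The record shape below (a `channel` and a `landing_page` key per visit) and the function name `top_landing_pages` are assumptions for this example; real exports will use different field names.

```python
from collections import Counter


def top_landing_pages(visits, channel="ai_assistants", limit=5):
    """Count landing pages for one channel, most visited first.

    `visits` is assumed to be a list of dicts with hypothetical
    "channel" and "landing_page" keys.
    """
    counts = Counter(
        visit["landing_page"] for visit in visits if visit["channel"] == channel
    )
    return counts.most_common(limit)


# Made-up sample data, for illustration only.
sample_visits = [
    {"channel": "ai_assistants", "landing_page": "/compare/tool-a-vs-tool-b"},
    {"channel": "ai_assistants", "landing_page": "/compare/tool-a-vs-tool-b"},
    {"channel": "ai_assistants", "landing_page": "/blog/how-it-works"},
    {"channel": "organic_search", "landing_page": "/blog/how-it-works"},
    {"channel": "direct_unknown", "landing_page": "/"},
]
print(top_landing_pages(sample_visits))
```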

Conversions and intent signals

If your tool tracks goals or events, you can go further:

- demo requests;

- newsletter signups;

- meetings booked;

- trial starts;

- purchases;

- clicks on pricing or strategic CTAs.

At that point, you are no longer just measuring curiosity. You are measuring traffic quality.

That is where an “AI assistants” segment becomes valuable. Not because it sounds trendy, but because it lets you compare:

- volume;

- engagement rate;

- visit depth;

- conversion.
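The comparison above can be sketched as a small per-segment summary. This is a minimal Python example under assumed inputs: each session is a dict with hypothetical `engaged`, `pages`, and `converted` fields, and "engaged" means whatever your own tool defines it to mean.

```python
def summarize_segment(sessions):
    """Summarize volume, engagement rate, visit depth, and conversion rate.

    `sessions` is assumed to be a list of dicts with hypothetical keys:
    "engaged" (bool), "pages" (int), and "converted" (bool).
    """
    total = len(sessions)
    if total == 0:
        # Avoid dividing by zero for a segment with no traffic yet.
        return {
            "sessions": 0,
            "engagement_rate": None,
            "avg_pages": None,
            "conversion_rate": None,
        }
    engaged = sum(1 for s in sessions if s["engaged"])
    pages = sum(s["pages"] for s in sessions)
    converted = sum(1 for s in sessions if s["converted"])
    return {
        "sessions": total,
        "engagement_rate": engaged / total,
        "avg_pages": pages / total,
        "conversion_rate": converted / total,
    }
```

Running the same function on the AI assistants segment and on a reference segment such as organic search gives two comparable rows. With very small segments the rates swing widely, so read them alongside the raw session count.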

UTM campaigns when you control distribution yourself

There is also a simpler case: links that you distribute.

If you publish something in a newsletter, a document, a partnership page, or a directory, and you want to observe how your own distribution performs, UTM parameters remain useful.

But do not assume AI assistants will preserve your tracking conventions in every context. UTM tags are excellent for measuring links you intentionally distribute. They are much less reliable for mapping every citation or click generated by third-party AI systems.
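For links you control, tagging can be automated. The sketch below uses Python's standard `urllib.parse` module; the function name `add_utm` and the example values are assumptions for illustration. It replaces any existing `utm_` parameters and preserves other query parameters and the fragment.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse


def add_utm(url, source, medium, campaign):
    """Return `url` with utm_source, utm_medium, and utm_campaign set."""
    parts = urlparse(url)
    # Keep existing non-UTM parameters; drop old UTM values to avoid duplicates.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.startswith("utm_")
    ]
    query += [
        ("utm_source", source),
        ("utm_medium", medium),
        ("utm_campaign", campaign),
    ]
    return urlunparse(parts._replace(query=urlencode(query)))


# Hypothetical newsletter link, for illustration only.
print(add_utm("https://example.com/pricing?plan=pro", "newsletter", "email", "april_launch"))
```

This only covers links you publish yourself. An assistant that cites your page will typically link to whatever URL it found, with or without your parameters.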

What you will not measure cleanly

This is often the most important part of the conversation.

Good measurement also means accepting limits.

You will not see mentions without clicks

If an assistant summarizes your content, uses your ideas, or cites your page without sending a visit, your web analytics will remain silent.

That does not mean your content had no role. It just means web session data is not the right sensor for that kind of visibility.

You will not always separate AI traffic from direct traffic

When a visit arrives without a usable referrer, you enter a gray zone.

That gray zone may include:

- real direct traffic;

- email traffic;

- messaging apps;

- app traffic;

- browsers or contexts that strip source data;

- potentially some traffic originating from AI assistants.

The only serious posture is to treat that as uncertainty, not as a hidden truth waiting to be renamed.

You will not perfectly isolate AI-powered search experiences

When an AI experience remains embedded inside an existing search environment, isolated attribution becomes harder.

Google explains its AI features in Search as part of the broader web search experience, with the same core SEO fundamentals still applying. For marketing teams, the practical implication is simple: not every visit influenced by AI will appear as a distinct AI source in your reports.

You should not confuse citation, visit, and revenue

Being cited in an assistant, receiving a click, getting an engaged session, and generating a conversion are four different things.

A useful dashboard needs to keep those levels separate.

Otherwise, it becomes very easy to move from a modest observation, “we are seeing some visits from ChatGPT and Perplexity,” to a much bigger story, “AI is becoming our next major acquisition channel.”

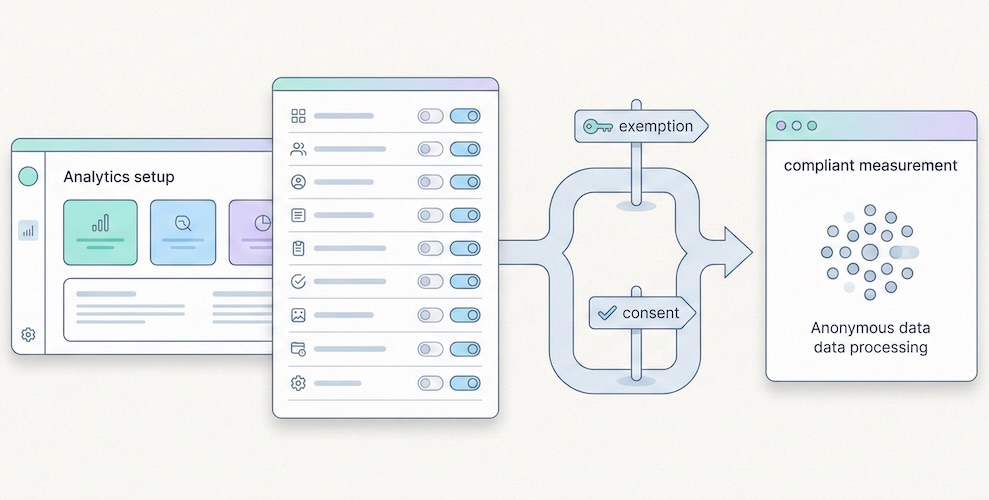

The cleanest way to measure this traffic

The goal is not to build a perfect system. The goal is to create a simple, stable, reusable reading framework.

1. Create a dedicated AI assistant segment or channel

Start with a short list of sources you can actually observe. For example:

- ChatGPT / OpenAI;

- Perplexity;

- Claude / Anthropic;

- optionally Copilot or Gemini if they are already visible in your data.

Be conservative. Do not add ten hypothetical domains that never show up.

2. Analyze landing pages first

Before commenting on volume, look at:

- which pages receive those visits;

- whether those pages are old or recent;

- whether they answer comparative, practical, or explanatory queries;

- whether they work well as entry pages.

This is often where the useful insight lives.

3. Compare traffic quality, not just traffic size

Low-volume but high-intent traffic can matter much more than a visible but shallow spike.

At a minimum, compare:

- whatever engagement metric your tool provides;

- visit depth;

- key conversions;

- CTA clicks;

- exit pages.

4. Keep an eye on direct or unknown as a separate gray zone

You should not merge direct traffic into AI traffic. But ignoring it completely would also be naive.

The better approach is to document it as a possible gray zone. If visible AI referrers increase and direct traffic rises on the same landing pages, that may support a hypothesis. It is still not proof.
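One way to look for that pattern is to compare two periods page by page. The Python sketch below flags landing pages where both visible AI referrals and direct/unknown visits went up. The input shape and the name `pages_with_joint_increase` are assumptions for this example, and a flagged page is only a lead to investigate: seasonality, campaigns, or email sends can produce the same pattern.

```python
def pages_with_joint_increase(previous, current):
    """List pages where AI referrals and direct/unknown visits both rose.

    Both arguments are assumed to map a landing page to visit counts, e.g.
    {"/compare": {"ai_assistants": 5, "direct_unknown": 40}}.
    Returns (page, ai_delta, direct_delta) tuples.
    """
    flagged = []
    for page, now in current.items():
        before = previous.get(page, {})
        ai_delta = now.get("ai_assistants", 0) - before.get("ai_assistants", 0)
        direct_delta = now.get("direct_unknown", 0) - before.get("direct_unknown", 0)
        if ai_delta > 0 and direct_delta > 0:
            flagged.append((page, ai_delta, direct_delta))
    return flagged


# Made-up counts for two periods, for illustration only.
last_month = {
    "/compare": {"ai_assistants": 5, "direct_unknown": 40},
    "/blog/guide": {"ai_assistants": 2, "direct_unknown": 30},
}
this_month = {
    "/compare": {"ai_assistants": 12, "direct_unknown": 55},
    "/blog/guide": {"ai_assistants": 3, "direct_unknown": 25},
}
print(pages_with_joint_increase(last_month, this_month))
```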

5. Document your reading rules

This small step makes a big difference for teams.

Write down:

- which domains are included in the AI segment;

- what is not measured;

- what falls into direct or unknown;

- which conversions are tracked;

- how often the segment is reviewed.
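The checklist above can live in a wiki page, but keeping it next to the reporting code makes it harder to lose. Here is a minimal sketch using a Python dataclass; the class name `AISegmentRules`, the field names, and every example value are hypothetical and should be replaced with your own rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISegmentRules:
    """Written-down interpretation rules for the AI assistants segment."""
    included_domains: tuple
    not_measured: tuple
    gray_zone: tuple
    tracked_conversions: tuple
    review_cadence: str


def describe(rules):
    """Render the rules as plain text for a dashboard note or report footer."""
    return "\n".join([
        "Included domains: " + ", ".join(rules.included_domains),
        "Not measured: " + ", ".join(rules.not_measured),
        "Direct/unknown gray zone: " + ", ".join(rules.gray_zone),
        "Tracked conversions: " + ", ".join(rules.tracked_conversions),
        "Review cadence: " + rules.review_cadence,
    ])


# Example values are assumptions for illustration only.
RULES = AISegmentRules(
    included_domains=("chatgpt.com", "perplexity.ai", "claude.ai"),
    not_measured=("citations without clicks",),
    gray_zone=("visits without a referrer",),
    tracked_conversions=("demo request", "newsletter signup"),
    review_cadence="monthly",
)
print(describe(RULES))
```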

A good dashboard is not enough on its own. You also need an interpretation rulebook.

The most common mistakes

Calling every direct traffic increase “AI traffic”

This is probably the most common mistake.

Direct traffic is an imperfect bucket. It can contain many things. Assigning it a single cause without evidence weakens the entire analysis.

Creating an overly broad AI channel from day one

If you group together any domain that vaguely sounds AI-related, you create a noisy segment. A narrower but cleaner segment is usually more useful than a wide and doubtful one.

Focusing on volume before conversion

Getting 500 weak visits from an AI interface matters less than getting 30 visits to a comparison page that converts.

Mixing classic SEO, AI assistants, and branded traffic without a method

The right move is not to force a false opposition. It is to separate what is observable, what is comparable, and what remains hypothetical.

What to remember

Traffic from AI assistants is real. But it does not arrive as a single, clean, perfectly attributable source.

Some of it appears as visible referral traffic. Some of it gets lost in direct or unknown. Some of it never generates a session because no click happens. And some of it lives inside search environments where AI and classic search are harder to separate.

The best working approach comes down to four simple rules:

- isolate the referrers you can actually see;

- measure landing pages and conversions, not just sessions;

- treat direct as a gray zone, not as hidden certainty;

- clearly document what your AI segment includes, and what it does not.

That framework is less dramatic than a promise of total attribution. It is also much more useful.

FAQ

Should traffic from ChatGPT always be classified as direct traffic?

No. When a usable referrer is passed, it can be measured as referral traffic or grouped into a dedicated segment. But some visits may still end up as direct or unknown depending on the technical context.

Can I measure citations without clicks from AI assistants?

Not with standard web analytics. Without a click there is no session, so your web analytics tool sees nothing.

Should I create a separate AI channel in GA4?

Yes, if you are starting to see referring domains tied to AI assistants. GA4 documentation explicitly includes this use case in its custom channel group guidance.

Should AI traffic be treated as a major new acquisition channel right away?

Not automatically. First look at landing pages, traffic quality, and conversions before making that leap.

Can I perfectly separate classic Google Search from Google’s AI experiences?

Not always. When AI is embedded inside a broader search experience, isolated attribution becomes harder.

Sources

- OpenAI Help Center, ChatGPT search: https://help.openai.com/en/articles/9237897-chatgpt-search

- Claude Help Center, Using Research on Claude: https://support.claude.com/en/articles/11088861-using-research-on-claude

- Perplexity Help Center, How does Perplexity work?: https://www.perplexity.ai/help-center/en/articles/10352895-how-does-perplexity-work

- Google Analytics Help, Custom channel groups: https://support.google.com/analytics/answer/13051316

- Google Search Central, AI features and your website: https://developers.google.com/search/docs/appearance/ai-features

- Fathom Analytics Docs, Dashboard explained: https://usefathom.com/docs/start/dashboard

- Plausible Analytics, Breaking down our AI traffic surge: https://plausible.io/blog/ai-referral-traffic-and-optimization