Blog: Privacy, GDPR & Audience Measurement

Guides and analysis on GDPR compliance, privacy-first analytics, SEO measurement, and audience tracking for modern websites.

- 02 Mar, 2026

Session Replay (Hotjar, Clarity): France's Privacy Watchdog Opens Pandora's Box

You might be using Hotjar, Microsoft Clarity, or Fullstory to understand how visitors navigate your website. These "session replay" tools show you their clicks, mouse movements, and hesitations. It's convenient for fixing bugs or improving user experience. The problem? You're probably recording far more than you think. And France's data protection authority just put the practice under the microscope.

On February 25, 2026, the CNIL (Commission Nationale de l'Informatique et des Libertés) opened a public consultation on session replay tools. It's the first regulatory initiative of its kind in Europe. The consultation runs until April 22, 2026, with a final recommendation to follow. For website operators, agencies, and solution providers, the message is clear: the free-for-all is over.

The numbers speak volumes. In 2025, the CNIL issued €487 million in fines, including 21 sanctions specifically targeting cookies and tracking technologies. Google paid €325 million, Shein €150 million. Session replay, far more intrusive than a simple analytics cookie, is now in the crosshairs. This consultation isn't theoretical: it's the prelude to enforcement actions and potential penalties.

This article explains what session replay actually is, why it's riskier than standard analytics tools, what the CNIL's draft recommendation says, and how to achieve compliance before the final text is adopted. Waiting for the final version to act means scrambling to fix everything under time pressure.

What Session Replay Is and Why It's Different From Google Analytics

The Difference Between Audience Measurement and Full Recording

When you install Google Analytics, Matomo, or a privacy-first analytics tool, you collect aggregated metrics: visit counts, page views, bounce rates, traffic sources. You know 1,000 people visited your product page, but you don't see how each person navigated, pixel by pixel.

Session replay is the opposite. It records a user's entire browsing journey, as if filming their screen: mouse movements, clicks, scrolling, touch interactions on mobile, and sometimes even form inputs. This data is then replayed as a video. You see the user hesitate, go back, click three times on a button that doesn't work.

This is extremely useful for identifying bugs invisible in standard statistics. A form that crashes on Safari iOS 14, a poorly positioned payment button, an incomprehensible error message: everything becomes visible. But this granularity has a price: you're collecting personal data at a level of detail far beyond what standard analytics tools permit.

What These Tools Actually Record

Most session replay solutions capture by default:

- Cursor movements and positions (or finger touches on mobile).
- All clicks and double-clicks.
- Page scrolling.
- "Rage clicks" (repeated clicks on the same spot, indicating frustration).
- Prolonged hovers over certain elements.
- Tab or window changes (sometimes).
- Form inputs, unless explicitly masked.

This last point is critical. By default, some tools record what users type in form fields: name, email, address, phone number, and even sensitive data like bank details or health information if your site collects it. Most solutions offer automatic masking, but you need to activate it correctly.

Result: you can end up with recordings showing a user filling out a medical form, correcting a typo in their credit card number, or deleting and rewriting a message in a "cancellation reason" field. See the problem?

The Tools Involved

The three market leaders are:

- Hotjar: The most popular solution for SMEs and agencies. Simple interface, integrated heatmaps, free up to 35 sessions/day.
- Microsoft Clarity: Completely free, easy integration with Azure and Google Tag Manager, widely adopted since 2023.
- Fullstory: Enterprise-focused, with automatic behavior analysis and AI-driven anomaly detection.

But dozens of others exist: Lucky Orange, Smartlook, Mouseflow, SessionCam, Inspectlet, etc. The CNIL isn't targeting a specific solution: it's regulating the entire category.

What the CNIL Says in Its Draft Recommendation

Acceptable Uses According to the Authority

The CNIL doesn't say session replay should be banned. It sets a strict framework. According to the draft recommendation published on February 25, 2026, three uses are considered legitimate:

- Detection and understanding of technical errors: Identifying bugs, crashes, broken forms, elements not displaying properly on certain browsers or devices.
- User experience (UX) improvement: Spotting friction points, confusing paths, poorly placed elements. For example, discovering that 80% of users click a "Submit" button three times before understanding they first need to check a box.
- Customer support and assistance: Replaying a user's session when they encounter a problem to better understand their case and help resolve it.

These three uses share a common trait: they're technical or support-oriented. They're not marketing uses.

What's Excluded: Marketing and Retargeting

The CNIL is crystal clear on this. Session replay must not be used for:

- Advertising retargeting (showing targeted ads to a user who hesitated on a product page).
- Advanced marketing segmentation (creating audiences based on fine-grained behavior).
- Aggressive commercial optimization (identifying "hesitant buyers" to send them promotions).

Why this exclusion? Because these uses violate the data minimization principle. If your goal is to sell, you don't need to see every mouse movement. Aggregated statistics suffice. Session replay is disproportionate for these purposes.

If you're using Hotjar or Clarity to "better understand your customers" from a conversion marketing angle, you're out of bounds. And during a CNIL audit, that won't go well.

Mandatory Consent: No Exemption Possible

The draft recommendation is unambiguous: session replay requires prior and explicit consent from users. It cannot benefit from the cookie consent exemption for audience measurement.

Why? Because the exemption, governed by Article 5(3) of the ePrivacy Directive (implemented through national laws like France's Article 82 of the Data Protection Act), only covers trackers strictly necessary for service provision or exclusively dedicated to audience measurement in a very limited framework. Session replay fits neither category. It's a detailed behavioral analysis tool, not anonymized statistical measurement.

Concretely, this means:

- You must display a consent banner (via a CMP, Consent Management Platform).
- Session replay must appear as a distinct choice in the banner, with a clear description.
- Users must be able to refuse without affecting site access.
- If users refuse or withdraw consent, recording must stop immediately and already-collected data must be deleted (or irreversibly anonymized).

Minimization and Masking: Precise Technical Requirements

The CNIL emphasizes the minimization principle under GDPR Article 5(1)(c). You must only collect what's strictly necessary for your objective. In practice, this requires:

- Automatic masking of all sensitive form fields: passwords, banking details, health data, social security numbers, etc.
- Default masking of input fields, unless you can justify that recording is indispensable (for example, to reproduce a bug that only occurs with specific input).
- Sampling: Recording only a percentage of sessions, not 100%. If you have 10,000 daily visits, recording all 10,000 sessions is disproportionate. Sampling 5% or 10% is more than sufficient to identify bugs.
- Short retention period: Sessions should be deleted as soon as the objective is achieved. A session recorded to fix a bug doesn't need to be kept for 12 months "just in case."

The CNIL also recommends documenting your configurations. During an audit, you'll need to prove you activated masking, configured sampling, and limited retention periods.

Responsibilities: Who Does What?

Provider vs. Website Operator

The CNIL recommendation distinguishes two actors:

- The solution provider (Hotjar, Microsoft, Fullstory, etc.): They design the tool, define default settings, offer (or don't offer) masking and minimization options. They can be considered data controllers for their own uses (improving their product, for example) or processors if they only host data on behalf of the website operator.
- The website or mobile app operator: That's you, if you install Hotjar on your site. You're the data controller for your use of session replay. You must obtain consent, configure masking, define retention periods.

In some cases, the CNIL mentions joint controllership (GDPR Article 26): if the provider and operator pursue common purposes (for example, if Hotjar uses your data to improve its anomaly detection algorithm), they must sign a joint controller agreement.

Web Agencies: Beware the Contractual Trap

If you're a web agency installing Hotjar or Clarity for clients, the responsibility question gets complicated. Who must obtain consent? Who configures masking? Who gets sanctioned for non-compliance?

By default, it's the client (the website operator) who remains responsible as the controller. But if you haven't informed them of obligations, haven't properly configured the tool, or haven't documented settings, you can be held liable. European data protection authorities have already sanctioned technical service providers for failing their processor obligations under GDPR Article 28.

Our advice: Add a clause to your contracts now specifying:

- Who is responsible for session replay GDPR compliance.
- Who configures masking and sampling.
- Who updates the consent banner.
- Who maintains compliance documentation.

And bill for compliance work. It's not included in a standard "Hotjar installation" package.

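
The consent and sampling requirements described above can be illustrated with a small gating function that runs before any recording starts. This is a minimal sketch under stated assumptions: the names `in_sample` and `should_record`, the 10% rate, and the hash-based bucketing are illustrative choices, not part of the CNIL text or of any vendor's API.

```python
import hashlib

# Assumption: record at most 10% of consenting sessions (upper end of the 5-10% range).
SAMPLE_RATE = 0.10


def in_sample(session_id: str, rate: float = SAMPLE_RATE) -> bool:
    """Map a session ID deterministically to [0, 1) and compare it with the rate."""
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < rate


def should_record(session_id: str, replay_consent: bool, rate: float = SAMPLE_RATE) -> bool:
    """Record only if the visitor opted in to session replay AND falls in the sample."""
    if not replay_consent:
        # No consent (or consent withdrawn): never record, whatever the sampling says.
        return False
    return in_sample(session_id, rate)
```

Hashing the session ID rather than drawing a random number keeps the decision stable for the whole session, which makes the configured rate easier to document for an audit.
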
Alternatives and Best Practices for Staying Compliant

Option 1: Strictly Configure Session Replay

If you want to continue using Hotjar, Clarity, or equivalent, here are the steps:

- Activate automatic masking of all form fields. Most tools offer a "strict" mode that masks everything by default.
- Reduce sampling to 5-10% of sessions. You don't need to record 100% of traffic to detect bugs.
- Limit retention to 30 days maximum. If you haven't fixed the bug in 30 days, it wasn't urgent.
- Update your CMP (OneTrust, Axeptio, Cookiebot, Didomi, etc.) to add a specific "Behavioral Analysis" or "Session Replay" option, distinct from "Audience Measurement."
- Document everything: Screenshots of settings, spreadsheet listing masked fields, purpose justification.

Option 2: Replace with Heatmaps or Privacy-First Analytics

Session replay is often used for needs that don't require full recording. Some alternatives:

- Heatmaps: They show where users click most, without recording individual paths. Much less intrusive.
- Event-based analytics: Configure specific events in Google Analytics, Matomo, or a privacy-first tool to measure clicks on certain buttons, form errors, cart abandonments.
- A/B testing: Test two versions of a page rather than trying to "understand" why the current version doesn't work.

These approaches give you 80% of useful information with 10% of legal risk.

Option 3: Session Replay Strictly on User Request

An emerging practice is activating session replay only when users explicitly request it. For example:

- A user contacts support saying "I have a problem with the form."
- Support sends them a unique link that temporarily activates recording of their session, with explicit consent.
- The session is recorded, analyzed, then immediately deleted after problem resolution.

This is the most compliant method, but requires slightly more complex technical infrastructure.

What Happens After the Consultation

Timeline and Next Steps

The public consultation ends on April 22, 2026. Then the CNIL will:

- Analyze contributions received (professionals, trade associations, consumer groups, NGOs).
- Revise the draft recommendation if necessary.
- Adopt the final version, probably during the second half of 2026.
- Publish the recommendation on its website, with a transition period (typically 6 to 12 months).

During the transition period, the CNIL won't sanction immediately, but expects gradual compliance. After this deadline, enforcement will begin.

Risks of Non-Compliance

If you continue using session replay without consent or with non-compliant configurations, you risk:

- A formal notice from the CNIL or another European DPA (first step, public or not).
- A financial penalty up to €20 million or 4% of global annual turnover (GDPR Article 83).
- Publication of the sanction, with reputational impact.

In 2025, 67 out of 83 CNIL sanctions were issued via simplified procedure, with fines capped at €20,000 for "minor" violations. But for serious cases (massive collection, complete absence of consent, exposed sensitive data), amounts can be much higher. Shein took €150 million for cookies, and session replay is objectively more intrusive than a cookie.

Domino Effect Across Europe

France isn't alone. Other European authorities are watching closely. If the CNIL adopts a strict recommendation, it's likely that:

- The EDPB (European Data Protection Board) will use it as inspiration for an opinion or guidelines at the European level.
- German (BfDI), Italian (Garante), Spanish (AEPD), or Irish (DPC) authorities will follow with their own texts.

In other words, if you operate in Europe, complying with CNIL rules will be necessary anyway in the short term, even if you don't have French traffic.

Conclusion: Act Now, Not in April 2027

The CNIL consultation on session replay is a warning signal, not a surprise. Tools that record complete user journeys have been in regulators' sights for years. What's changing in 2026 is that the CNIL is moving from awareness-raising to formal regulation.

If you use Hotjar, Clarity, or any other session replay tool, you have two options. Either configure the tool strictly right now: masking, sampling, consent, documentation. Or consider less intrusive alternatives: heatmaps, privacy-first analytics, A/B testing. Inaction is no longer a viable strategy.

SMEs and web agencies have until the end of 2026 to comply without immediate risk. But the longer you wait, the more costly and rushed compliance will be. And given the fine amounts issued in 2025 (€487 million total), the risk is no longer theoretical.

For those seeking a simpler approach, there are audience measurement solutions that respect minimization and transparency principles by design. If this approach resonates with you, you can join Pomelo's waitlist to be informed of the launch.

FAQ

Can I continue using Hotjar or Clarity after the CNIL recommendation?

Yes, provided you meet the requirements: obtain explicit consent via a CMP banner, activate masking of all sensitive fields, limit sampling (5-10% of sessions maximum), reduce retention to 30 days, and document all your configurations. If you meet these conditions, you can continue using these tools for technical purposes (bug detection, UX improvement, customer support). However, marketing uses (retargeting, advanced segmentation) are excluded.

Is session replay covered by the consent exemption for audience measurement?

No. The consent exemption under Article 5(3) of the ePrivacy Directive only applies to audience measurement tools strictly limited to aggregated and anonymous statistics. Session replay, which records detailed individual paths, cannot benefit from it. You must therefore obtain user consent before activating recording, even if your objective is purely technical.

If I'm a web agency, who's responsible for compliance: me or my client?

By default, the website operator (your client) is the data controller for data collected via session replay. But you, as an agency, are responsible as a processor for proper technical configuration of the tool under GDPR Article 28. If you install Hotjar without activating masking, configuring sampling, or adding a consent banner option, you can be held liable. It's essential to clarify this responsibility allocation in a written contract and bill GDPR compliance work as a separate service.

What sanctions apply if I don't follow the CNIL recommendation?

The CNIL recommendation doesn't have force of law, but it clarifies how to apply GDPR and ePrivacy rules. Not respecting it exposes you to a formal notice, then a financial sanction up to €20 million or 4% of global turnover under GDPR Article 83. In practice, for SMEs, fines via simplified procedure are capped at €20,000 for less serious violations. But for massive collection without consent or exposed sensitive data, amounts can be much higher, as illustrated by 2025 sanctions (Google €325M, Shein €150M).

Are there less risky alternatives to session replay for improving UX?

Yes, several alternatives provide UX insights without recording complete individual paths. Heatmaps show most-clicked areas without identifying users. Event-based analytics measure specific actions (button clicks, form errors) with tools like Google Analytics, Matomo, or privacy-first solutions. A/B testing compares two page versions to identify the best performer. User surveys (post-purchase or exit-intent) provide direct qualitative feedback. These approaches provide 80% of useful information with much lower legal risks.

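
To make the masking and retention points from the article concrete, here is a hedged sketch of mask-by-default input handling and a 30-day purge. The field names, the allowlist, and the shape of a recording (a dict with a `recorded_at` timestamp) are hypothetical; real tools expose these settings through their own configuration screens.

```python
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=30)       # assumption: the 30-day ceiling suggested in the article
UNMASKED_FIELDS = {"search_query"}   # hypothetical allowlist; every other field is masked


def mask_input(field_name: str, value: str) -> str:
    """Mask every form value unless the field is explicitly allowlisted."""
    if field_name in UNMASKED_FIELDS:
        return value
    # A fixed placeholder avoids leaking even the length of what was typed.
    return "[masked]"


def is_expired(recorded_at: datetime, now: datetime) -> bool:
    """True when a recording is older than the retention window."""
    return now - recorded_at > RETENTION


def purge(recordings: list, now: datetime) -> list:
    """Keep only the recordings that are still inside the retention window."""
    return [r for r in recordings if not is_expired(r["recorded_at"], now)]
```

The allowlist direction matters: a new form field added to the site next month is masked automatically, instead of being recorded until someone remembers to add it to a blocklist.
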
Sources

- CNIL, "Session replay: the CNIL launches a public consultation on its draft recommendation", February 25, 2026 (https://www.cnil.fr/en/session-replay-cnil-launches-public-consultation-its-draft-recommendation)
- CNIL, "Sanctions and corrective measures: CNIL's actions in 2025", February 9, 2026 (https://www.cnil.fr/en/investigation-powers-cnil/sanctions-issued-cnil)
- CNIL, "Cookies and advertisements inserted between emails: GOOGLE fined 325 million euros by the CNIL", September 1, 2025 (https://www.cnil.fr/en/cookies-and-advertisements-inserted-between-emails-google-fined-325-million-euros-cnil)
- Nomos, "Session replay: the CNIL's draft recommendation", February 27, 2026 (https://www.nomosparis.com/en/session-replay-the-cnils-draft-recommendation/)
- PPC Land, "France's CNIL puts session replay tools under the privacy microscope", February 26, 2026 (https://ppc.land/frances-cnil-puts-session-replay-tools-under-the-privacy-microscope/)
- Solutions Numériques, "Rejeu de session : la CNIL ouvre une consultation publique pour encadrer ces outils de suivi", February 25, 2026 (https://www.solutions-numeriques.com/rejeu-de-session-la-cnil-ouvre-une-consultation-publique-pour-encadrer-ces-outils-de-suivi/)
- August Debouzy, "Cookies et autres traceurs, une action de régulation ciblée au niveau national", February 2026 (https://www.august-debouzy.com/fr/blog/2281-cookies-et-autres-traceurs-une-action-de-regulation-ciblee-au-niveau-national)


- 28 Feb, 2026

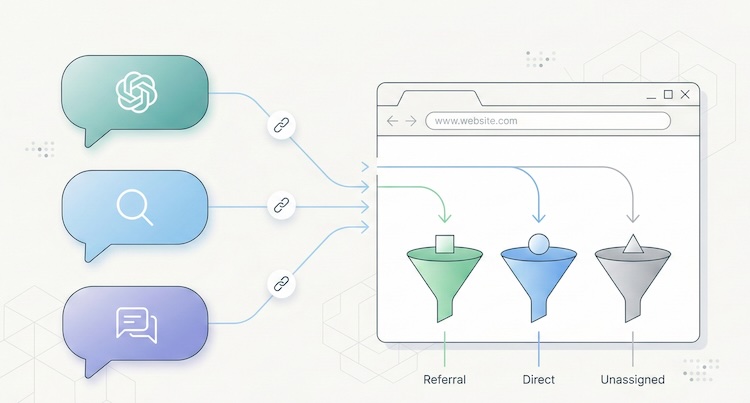

AI traffic: how to measure visits that ChatGPT, Perplexity and Claude send to your website

Something has shifted in the way people find your website. And chances are, you have no idea it's happening.

Since late 2024, conversational AI platforms have moved beyond answering questions. They now cite sources, insert links, and send real visitors to real websites. ChatGPT, Perplexity, Claude, Gemini, Copilot: these tools are becoming a genuine discovery channel, one that rivals traditional search engines in the quality of traffic it delivers.

The catch? Most analytics tools don't separate this traffic. It gets lumped into "referral," blends into "direct," or vanishes from reports entirely. You may already have visitors arriving through a ChatGPT recommendation, and your dashboard won't show it.

This article gives you the full playbook: how to spot AI traffic, why it matters, and what to do about it.

A new discovery channel, growing fast

The raw numbers are still modest. But the trajectory is hard to ignore.

A study by SE Ranking covering nearly 64,000 websites across 250 countries (January-April 2025) found that ChatGPT alone accounts for 78% of all AI referral traffic worldwide. Perplexity comes in at roughly 15%, Gemini at 6.4%. Claude and DeepSeek share the remainder at under 1% each, though both show compelling growth curves. (Source: SE Ranking, "AI Traffic in 2025")

A separate analysis by Conductor, reported by Search Engine Land, confirms this hierarchy across 13,770 domains and 3.3 billion sessions: AI traffic averages about 1% of total site visits, with ChatGPT generating 87% of it. (Source: Search Engine Land, Nov. 2025)

One percent sounds negligible. Two things make it anything but.

The growth rate is staggering. Between January and April 2025, ChatGPT's share of global internet traffic doubled, from 0.08% to 0.16%. Year-over-year growth in AI referral traffic exceeds 500% in some segments. And Gartner projects a 25% decline in traditional search volume by late 2026, driven by conversational AI agents.

The traffic quality is exceptional. Visitors arriving from AI platforms spend an average of 9 to 10 minutes per session on the sites they visit, compared to 3 to 4 minutes for organic search. SE Ranking found that Claude-referred sessions in the EU average a remarkable 67 minutes, suggesting extraordinarily deep engagement. AI referral traffic converts at roughly 1.5x the rate of social traffic, according to aggregated industry data.

The logic is straightforward: a user who clicks a link inside an AI response has already asked a specific question, received context, and chosen to visit your site from among the cited sources. Their intent is pre-qualified. They know why they're coming.

Why your analytics can't see it

If AI traffic is this valuable, why doesn't it show up clearly in your reports? Three technical issues create this blind spot.

The missing referrer problem

When someone clicks a link in Perplexity from a web browser, the HTTP Referer header typically passes perplexity.ai as the source. Your analytics tool can then classify the visit as a referral from Perplexity.

But this mechanism frequently fails. According to SparkToro, 60% of AI-driven sessions lack a usable referrer header. The reasons are varied: mobile apps (ChatGPT on iOS, Copilot in Windows) open links in internal webviews that don't pass referrer data. Some AI agents prefetch or preview pages without triggering the analytics script. And ChatGPT's built-in Atlas browser, launched in late 2025, behaves differently from standard browsers altogether. (Source: MarTech, Nov. 2025)

The result: a significant portion of AI traffic falls into the "direct" or "unassigned" bucket in your analytics, invisible and unattributed.

GA4's default classification

Google Analytics 4 treats AI traffic as "referral," the same category as a link from Facebook, a forum, or a directory listing. There is no dedicated "AI" channel in GA4's default setup. Google has signaled plans to add one, but implementation hasn't materialized yet.
In practice, if you open your acquisition report in GA4 without custom configuration, ChatGPT traffic is buried among dozens of other referral sources. For a site receiving hundreds of different referrers, spotting chatgpt.com or perplexity.ai requires knowing what to look for.

The bot-vs-human confusion

AI platforms interact with your site in two fundamentally different ways.

The first is referral traffic: a human clicks a link in an AI response and lands on your page. This is real traffic with a real visitor.

The second is crawling: AI platform bots (GPTBot for OpenAI, PerplexityBot, ClaudeBot, and others) visit your site to index content and feed their models. This crawl traffic is not useful audience data. It's data harvesting.

GA4 automatically filters known bots, but the list isn't comprehensive. Some newer AI bots slip through, while some legitimate human visitors from AI tools get incorrectly filtered. Cloudflare has observed crawl-to-referral ratios as high as 700:1 for Perplexity, which gives a sense of how much harvesting activity exists relative to actual human visits. (Source: Digiday, Dec. 2025)

How to identify AI traffic in your tools

Two approaches work, depending on what you're using.

In GA4: create a dedicated "AI Traffic" channel

The recommended method is to build a custom channel group that aggregates all known AI sources. Here's the process:

1. In GA4, go to Admin > Data Settings > Channel Groups.
2. Click the default channel group, then "Copy" to create a new one.
3. Add a channel called "AI Traffic."
4. Set the rule: Match type = "matches regex", then paste this pattern:

       (chatgpt\.com|chat\.openai\.com|perplexity\.ai|claude\.ai|gemini\.google\.com|copilot\.microsoft\.com|deepseek\.com|meta\.ai)

5. Drag your "AI Traffic" channel above the default "Referral" channel in the priority order. This is critical: GA4 evaluates rules top-down, and if "AI Traffic" sits below "Referral," visits will be classified as referral before reaching your rule.

This setup only applies to new data (no retroactive effect). Allow a few days before results appear. For a one-time analysis of historical data, create an Explore report with a filter on "Session source" using the same regex. (Source: MarTech, Nov. 2025)

In a lightweight analytics tool (Plausible, Fathom, etc.)

This is where a well-designed simple tool outperforms a complex one. In Plausible, the "Sources" report displays every identified referrer directly. If chatgpt.com or perplexity.ai appears as a source, you see it instantly, without setup, without regex, without channel groups. Click the source to filter the entire dashboard by that origin and analyze entry pages, time on site, and triggered events.

Plausible documented its own experience: in 2024, the Plausible blog saw a 2,200% surge in AI referral traffic within months, all identifiable from their standard dashboard with zero configuration. (Source: Plausible, Dec. 2024)

This is a textbook case where the frugal analytics philosophy pays off: when a tool is designed to surface essential data without layers of configuration, emerging signals become visible immediately. A tool like GA4, which requires technical expertise for every new traffic source, creates a systematic delay in trend detection. For a detailed breakdown of how these tools compare, see our Plausible vs Fathom vs Simple Analytics comparison.

AI referral traffic vs AI crawling: two different things

A common mistake is conflating referral traffic (humans clicking) with crawling (bots scraping). They deserve separate attention because they raise different questions.

AI referral traffic is an opportunity. It represents a qualified, pre-informed visitor arriving with intent. Measuring it lets you optimize landing pages, adapt content, and understand how AI platforms perceive your site.

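
The same domain list can be applied outside GA4, for example to server logs or a custom dashboard. The sketch below is an illustration only: the `classify` function and its three labels are assumptions, and the pattern is anchored to the hostname, which is slightly stricter than the unanchored regex pasted into GA4.

```python
import re
from typing import Optional
from urllib.parse import urlparse

# Same domains as the GA4 rule, anchored so that "notchatgpt.com" does not match.
AI_REFERRER = re.compile(
    r"(^|\.)(chatgpt\.com|chat\.openai\.com|perplexity\.ai|claude\.ai|"
    r"gemini\.google\.com|copilot\.microsoft\.com|deepseek\.com|meta\.ai)$"
)


def classify(referrer: Optional[str]) -> str:
    """Label a visit as 'ai', 'referral', or 'direct' from its Referer URL."""
    if not referrer:
        # Missing header: this is where unattributed AI visits end up.
        return "direct"
    host = (urlparse(referrer).hostname or "").lower()
    if AI_REFERRER.search(host):
        return "ai"
    return "referral"
```

Note that the function expects a full URL, as sent in the HTTP Referer header. A visit that arrives without the header is still counted as "direct", so this classification inherits the blind spot described earlier.
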
AI crawling is a governance question. Bots like GPTBot, PerplexityBot, and ClaudeBot visit your site to train their models or answer user queries in real time. Some do so aggressively: Cloudflare found that Googlebot's crawl volume (which also feeds Gemini) dwarfs that of all other AI bots combined.

You can control crawling through your robots.txt file:

    User-agent: GPTBot
    Disallow: /

    User-agent: PerplexityBot
    Disallow: /

    User-agent: ClaudeBot
    Disallow: /

But beware the paradox: blocking the crawl can reduce your referral traffic. If an AI can't index your content, it can't recommend it to users. This is a trade-off to make deliberately.

An emerging approach uses an llms.txt file (a Markdown file placed at your site's root) to guide AI platforms toward the content you want to make accessible, without blocking all crawling. Anthropic (the company behind Claude) uses this mechanism on its own site.

How to get cited by AI platforms

Understanding AI traffic also means understanding what triggers it. AI platforms don't cite sites randomly. Several factors drive citations.

Content structure matters. Pages with clear heading hierarchies (H2, H3, lists) that directly answer specific questions are roughly 40% more likely to be cited, according to analysis from Superprompt. Structured FAQ sections are particularly effective: they match the question-and-answer format of AI interactions exactly.

Freshness is a strong signal. Unlike Google, which tends to favor established, older content, AI platforms prefer recently updated material. Pages updated within the last 30 days receive roughly 3x more AI citations on average.

Original data attracts citations. Data tables, proprietary statistics, and exclusive benchmarks get cited about 4x more than generic content. This is another argument for precise, data-driven KPIs over vanity metrics.

Traditional SEO remains the foundation. Multiple converging studies suggest that 77% of AI optimization stems directly from strong conventional SEO. Sites ranking in Google's top 10 are significantly more likely to be cited by LLMs. SEO doesn't depend on Google Analytics, but it remains the bedrock on which AI visibility is built.

What this means for choosing an analytics tool

AI traffic exposes a structural weakness in complex analytics platforms: their inability to surface an emerging signal without prior configuration.

With GA4, you need to create a channel group, write a regex, update it regularly (new AI tools launch every month), and accept that the data won't be retroactive. It's doable, but it demands technical expertise that most small business owners and freelancers simply don't have.

With a well-designed lightweight analytics tool, AI referrers appear naturally in the sources report, right alongside Google, LinkedIn, or Twitter. No setup, no regex, no blind spots. That's the core principle of analytical sobriety: collect less data, but make every data point immediately readable.

AI traffic is not a passing phenomenon. It's the early stage of a structural shift in how people discover content online. The sites measuring it today will have a competitive edge tomorrow, not because the volume is enormous, but because the quality of this traffic makes it a powerful conversion lever.

The question is no longer whether AI platforms send traffic to your site. It's whether your measurement tool shows it to you.

Frequently asked questions

What percentage of my traffic comes from AI?

On average, about 1% of a site's total traffic originates from AI platforms (ChatGPT, Perplexity, Gemini, etc.), according to Conductor's November 2025 study of 13,770 domains. This figure can be higher for low-traffic sites or niche B2B properties. Critically, that 1% only reflects identifiable traffic: SparkToro found that 60% of AI sessions lack a referrer header and fall into "direct." If only about 40% of AI sessions are identifiable, the true figure is roughly 1 / 0.4 = 2.5 times the measured one, so actual AI traffic is likely 2 to 3 times higher than what your dashboard shows.

How do I see ChatGPT traffic in Google Analytics 4?

GA4 doesn't yet have a native "AI" channel. You need to create a custom channel group: go to Admin > Data Settings > Channel Groups, add an "AI Traffic" channel with a regex rule covering AI domains (chatgpt.com, perplexity.ai, claude.ai, gemini.google.com, copilot.microsoft.com). Place it above the "Referral" channel in the hierarchy. Data will only be collected from the date you create the channel.

Should I block AI bots with robots.txt?

It's a trade-off. Blocking AI bots (GPTBot, PerplexityBot, ClaudeBot) via robots.txt prevents your content from being indexed by these platforms, which may reduce citations and referral traffic. On the other hand, not blocking means your content feeds AI model training, raising intellectual property and consent questions. A middle-ground approach uses an llms.txt file to guide AI platforms toward the content you want them to access.

Can cookieless analytics detect AI traffic?

Yes, and often better than GA4. Cookieless tools like Plausible, Fathom, or Simple Analytics display AI referrers directly in their sources report, with no configuration needed. The absence of setup layers (channel groups, regex, filters) means new traffic sources are visible immediately. It's a structural advantage of the frugal approach for detecting emerging signals.

How do I optimize my content to get cited by ChatGPT or Perplexity?

Five key levers: structure content with clear headings (H2/H3) and FAQ sections; keep content fresh (AI platforms favor recency); produce original data (tables, statistics, benchmarks); maintain strong traditional SEO (sites ranking well in Google are cited more by AI); and consider an llms.txt file to make your structured content easily accessible to AI crawlers. Pages that directly answer specific questions in a clear format consistently earn the most citations.

Data verified February 2026. AI traffic market shares are evolving rapidly. This article will be updated every six months.
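
Before deploying a robots.txt that blocks AI crawlers, it can help to check locally which user agents the rules actually affect. The sketch below uses Python's standard `urllib.robotparser` on the three-bot example from this article; the `allowed` helper is a hypothetical convenience wrapper, and the result only describes what a well-behaved crawler should do, not what every bot does in practice.

```python
from urllib.robotparser import RobotFileParser

# The robots.txt rules from the article, as they would appear in the file.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: PerplexityBot
Disallow: /

User-agent: ClaudeBot
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(user_agent: str, path: str = "/") -> bool:
    """Return True if the rules above let this user agent fetch the path."""
    return parser.can_fetch(user_agent, path)


for bot in ("GPTBot", "PerplexityBot", "ClaudeBot", "Googlebot"):
    print(bot, "allowed" if allowed(bot) else "blocked")
```

Because the file contains no wildcard (`*`) group, any crawler not named explicitly stays allowed, which is why Googlebot is unaffected by these rules.
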

- 07 Feb, 2026

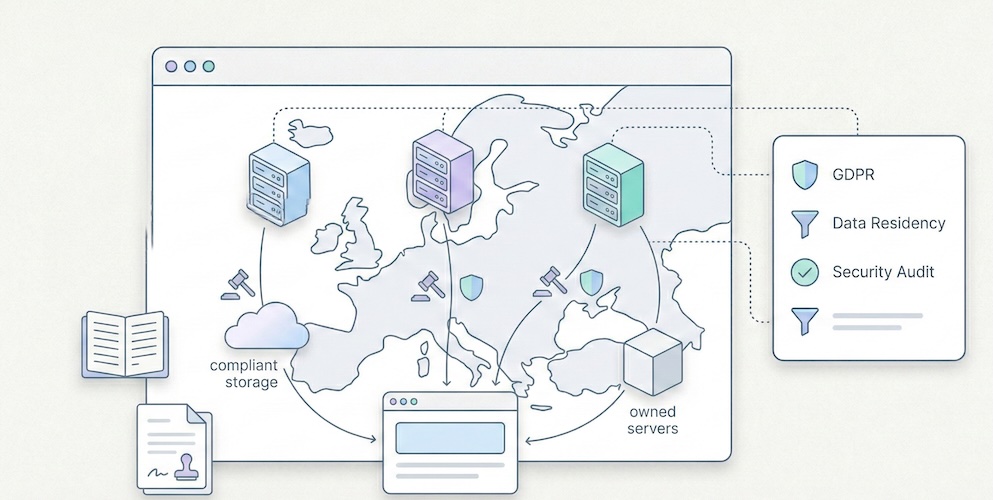

Plausible vs Fathom vs Simple Analytics: the 2026 privacy-first analytics comparison

You have decided to leave Google Analytics behind. You understand that "free" comes at a real cost, that GA4's complexity exceeds your actual needs, and that GDPR compliance deserves more than a poorly configured cookie banner. Good. You are part of a fast-growing movement.

Now comes the hard part: among the privacy-first alternatives, which one actually fits your situation? Three names keep coming up: Plausible, Fathom and Simple Analytics. They are the most cited, most mature and most credible options in the "frugal analytics" segment. But their differences, often invisible in marketing copy, have very real consequences on your bill, your compliance posture and your daily workflow.

This comparison does not aim to crown a universal winner. It provides the factual elements you need to make an informed choice. We verified pricing on official pages, documented actual features, and added two outsiders often overlooked in these discussions: Pirsch and Umami.

What these three solutions share

Before diving into differences, let us establish common ground. Plausible, Fathom and Simple Analytics share a foundation that radically separates them from Google Analytics: None of them use cookies by default. They do not build advertising profiles. Their scripts weigh roughly 6 KB or less (compared to roughly 45 KB for GA4, according to HTTP Archive measurements). They display all essential metrics on a single page, with no nested menus and no training required.

On the legal front, all three claim GDPR compliance without a cookie banner. In practice, the strength of that claim varies, and that is one of the points we will detail below.

Finally, all three are independent companies with no major venture capital, funded by their subscriptions. That is a strong signal of long-term sustainability.

Real pricing, compared side by side

The entry price does not tell the full story. What matters is the cost at comparable volume.
Here are the rates verified on each solution's official page as of February 2026.

Monthly pricing grid (USD, monthly billing)

| Monthly volume | Plausible (Starter) | Fathom | Simple Analytics (Simple) |
| --- | --- | --- | --- |
| 10,000 pageviews | $9 | $15 | $15 |
| 100,000 pageviews | $9 (same tier) | $15 | ~$19 |
| 200,000 pageviews | $14 (Growth) | $25 | ~$29 |
| 500,000 pageviews | ~$19 (Business) | $45 | ~$49 |
| 1,000,000 pageviews | Custom | $60 | Custom |

Sources: plausible.io/pricing, usefathom.com/pricing, simpleanalytics.com/pricing. Rates verified February 2026.

Key takeaways:

Plausible is the cheapest option at low volume ($9/month for 10k pageviews). But pricing rises quickly: the Growth plan at $14 and the Business plan at $19 unlock additional features (more sites, team access, funnels).

Fathom offers a single feature set across all tiers, with pricing based solely on pageview volume, starting at $15/month. No free plan. No discounts. Their stated philosophy: the same price for everyone, no promotions ever.

Simple Analytics offers a free plan (limited to 30 days of history) and a Simple plan at $15/month. The Team plan ($40/month) adds collaboration and API access. Their billing adjusts automatically based on the three-month rolling average of your traffic.

Two outsiders worth knowing

Pirsch (based in Germany) offers one of the lowest entry prices on the market: $6/month for 10,000 pageviews, $10/month for 100,000 pageviews. It includes white-labelling and up to 50 domains. Source: pirsch.io/pricing.

Umami is open source and fully self-hostable at no cost. It is the only solution in this comparison with zero licensing fees, provided you manage hosting yourself. For those who prefer a managed service, Umami Cloud starts at $9/month. Source: umami.is.

Data hosting and location

This is the critical point for GDPR compliance. The question is not just "where are the servers?" but "who operates the infrastructure and under which jurisdiction?"

| Solution | Data location | Infrastructure | Legal entity |
| --- | --- | --- | --- |
| Plausible | European Union (Hetzner, Germany) | Owned by European companies | Plausible Insights OÜ (Estonia) |
| Fathom | Servers in Germany (via AWS EU) | Amazon Web Services | Conva Ventures Inc. (Canada) |
| Simple Analytics | Netherlands | European-owned servers | Simple Analytics B.V. (Netherlands) |
| Pirsch | Germany | German servers | Emvi Software GmbH (Germany) |
| Umami (Cloud) | Variable by plan | Vercel/Cloud | Umami Software Inc. (USA) |

Plausible emphasises that its entire infrastructure is operated by European companies. As of early 2026, they report over 16,000 paying subscribers, including 600+ enterprise accounts. Source: plausible.io/enterprise-web-analytics.

Fathom uses AWS in the EU region (Frankfurt), but the legal entity is Canadian. Canada benefits from an adequacy decision by the European Commission, which simplifies data transfers. However, for organisations with strict data sovereignty requirements, this is not equivalent to a fully European entity.

Simple Analytics is the most explicit about data location: data exclusively in the Netherlands, proprietary servers, no US-based subprocessors. This is the strongest argument for organisations subject to strict sovereignty policies.

Pirsch, based and hosted in Germany, offers a comparable alternative in terms of European data localisation.

The privacy question

All three solutions call themselves "privacy-first". But the technical details matter.

Plausible uses a hash of the visitor's IP address combined with the User-Agent and a daily salt to identify unique visitors. The raw IP address is never stored. The hash is renewed daily, which prevents long-term tracking. This is a form of pseudonymisation.

Fathom uses a similar hashing approach but adds a routing layer through what they call "unique signatures". Like Plausible, the raw IP is not retained.
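The daily-salted hash described above can be sketched in a few lines of Python. This is an illustrative sketch only, not the actual code of Plausible or Fathom: the function names, the in-memory salt store, the 16-byte salt, and the choice of SHA-256 are all assumptions made for the example.

```python
import hashlib
import secrets
from datetime import date

# Hypothetical in-memory salt store: at most one random salt, for the current day.
_daily_salts: dict[date, bytes] = {}


def daily_salt(day: date) -> bytes:
    """Return the salt for `day`, creating it on first use and discarding older salts."""
    if day not in _daily_salts:
        # Dropping previous salts means yesterday's hashes can no longer be recomputed.
        _daily_salts.clear()
        _daily_salts[day] = secrets.token_bytes(16)
    return _daily_salts[day]


def visitor_id(ip: str, user_agent: str, day: date) -> str:
    """Hash IP + User-Agent with the day's salt; only the digest is kept, never the raw IP."""
    material = daily_salt(day) + ip.encode() + b"|" + user_agent.encode()
    return hashlib.sha256(material).hexdigest()
```

Within one day, the same IP and User-Agent produce the same identifier, which is enough to count unique visitors. Once the salt rotates, the same visitor gets an unrelated identifier, which is what prevents long-term tracking.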
Simple Analytics stands apart by claiming to collect no personal data whatsoever, including in hashed form. No IP hash, no User-Agent recorded. Their unique visitor counting relies on a different mechanism based on referrers and URLs. This is the most radical approach to data minimisation.

This difference has a direct consequence: Simple Analytics can legitimately claim not to process personal data within the meaning of the GDPR, which strengthens the case for consent exemption. For Plausible and Fathom, the question is more nuanced: a hashed IP, even if non-reversible, could be considered pseudonymised data. In practice, data protection authorities (including the CNIL in France and the ICO in the UK) tend to accept these approaches if they meet exemption criteria (no cross-referencing, limited retention, strictly statistical purpose).

For more on consent exemption conditions, see our dedicated article: Audience measurement, GDPR and cookie banner exemption.

Features: what each one does (and does not do)

All these solutions have chosen simplicity. But "simple" does not mean identical. Here are the differences that matter in daily use.
Feature comparison table

| Feature | Plausible | Fathom | Simple Analytics |
| --- | --- | --- | --- |
| Single-page dashboard | Yes | Yes | Yes |
| Custom events | Yes | Yes | Yes |
| Goals / Conversions | Yes (advanced funnels) | Yes | Yes |
| Multi-step funnels | Yes (Business plan) | No | No |
| Google Search Console integration | Yes | No | No |
| E-commerce tracking (revenue) | Yes (Business plan) | Yes | No |
| GA4 data import | Yes | Yes | No |
| Export API | Yes | Yes | Yes (Team plan) |
| Email reports | Yes | Yes | Yes |
| Dashboard sharing | Yes (public/private link) | Yes (shareable link) | Yes |
| Multi-site | 1 (Starter) / 3+ (Growth) | 50 included | 5 (Free) / 10+ (Simple) |
| Team members | 1 (Starter) / 3 (Growth) | 1 (base plan) | 1 (Simple) / 2+ (Team) |
| Data retention | 3-5 years by plan | Unlimited | 30 days (Free) / 3-5 years |
| Open source | Yes (Community Edition) | No | No |
| Self-hosting | Yes (CE, reduced features) | No | No |
| White-label | No (except Enterprise) | No | No |

Key highlights:

Plausible is the most feature-rich of the three. The Google Search Console integration is a significant advantage for SEO: it lets you see search queries directly in the analytics dashboard, without switching tools. Multi-step funnels (Business plan) bring it closer to more advanced tools. And being open source reassures organisations that want to audit the code.

Fathom stands out with its unlimited data retention policy and the inclusion of 50 sites from the base plan. For a freelancer or agency managing many low-traffic sites, this is a real economic advantage. Their infrastructure is built for scale: they claim to handle sites with one billion pageviews per month.

Simple Analytics bets everything on simplicity and absolute privacy. Their "Mini Websites" feature lets you see the exact pages that referred your site (for example, a specific tweet), which other solutions do not offer. Their built-in AI tool lets you query your analytics in natural language.

Script weight and performance impact

For a website, every kilobyte of JavaScript affects loading time and Core Web Vitals.
This is a criterion that should not be overlooked, especially if SEO is a priority.

| Solution | Script weight | Estimated impact |
| --- | --- | --- |
| Plausible | < 1 KB | Negligible |
| Fathom | ~2 KB | Negligible |
| Simple Analytics | ~6 KB | Very low |
| Pirsch | < 1 KB (or server-side) | Negligible to zero |
| Google Analytics (GA4) | ~45 KB | Measurable (LCP, INP) |

All solutions in this comparison have a negligible performance impact, especially compared to GA4. The advantage goes to Plausible and Pirsch, whose scripts are lightest. Pirsch also offers server-side integration (via API or SDK), which eliminates client-side JavaScript entirely.

To understand in detail why analytics script weight matters for SEO, see our article: Myth: you need Google Analytics for SEO.

Which tool for which profile?

Rather than declaring a winner, here is a decision guide by real-world situation.

You are an indie developer or maker with a SaaS

You manage one or two projects, traffic is moderate (< 100k pageviews/month), and you want a tool that installs in 30 seconds.

Best pick: Plausible (Starter at $9/month) for the best value at the first tier, open source, and Search Console integration.

Alternative: Pirsch ($6/month) if budget is very tight, or Umami (free) if you are comfortable with self-hosting.

You are a freelancer or agency managing 10-30 client sites

Volume per site is low, but the number of sites is high. You need separate dashboards and simple reporting.

Best pick: Fathom ($15/month, 50 sites included). No competitor includes as many sites in the base plan. Unlimited data retention means you never lose client history.

Alternative: Pirsch, which also offers 50 domains from the first plan.

You are an SME with strict compliance obligations (DPO, processing register)

The question is not price but demonstrating compliance to your DPO or supervisory authority.

Best pick: Simple Analytics, for the "zero personal data" argument. This is the easiest position to defend in a data processing register.
Alternative: Plausible, whose 100% European hosting on European-owned infrastructure (not AWS) strengthens the sovereignty case.

You are an organisation that needs funnels, e-commerce tracking or advanced analysis

You have outgrown a minimalist dashboard. You need multi-step conversion tracking.

Best pick: Plausible (Business plan). It is the only solution in this comparison that offers advanced funnels and e-commerce revenue tracking while staying within the privacy-first paradigm.

For a broader view including GA4 and Matomo, see our general comparison: Google Analytics, Matomo and frugal analytics: a 2026 guide to choosing.

Total cost: beyond the sticker price

The monthly fee is only part of the equation. Here are the hidden costs (or avoided costs) to factor into your calculation.

Costs avoided compared to GA4: no training required (GA4 often requires days of training), no consultant for configuration, no Consent Management Platform to maintain if you qualify for the consent exemption, no legal risk from data transfers to the United States.

Migration cost: Plausible and Fathom let you import Google Analytics history. Simple Analytics does not. If historical continuity matters to you, this is a consideration.

Self-hosting cost (Plausible CE, Umami): free in licensing, but factor in maintenance time, updates, and server cost (roughly $5 to $20/month for a VPS depending on volume). And Plausible Community Edition does not include all cloud features (funnels, e-commerce, Sites API).

To go deeper on the real cost of analytics, our article on data obesity explains the economic consequences of over-collection: Data obesity: why your SME does not need Big Data.
Final summary table

| Criterion | Plausible | Fathom | Simple Analytics | Pirsch |
| --- | --- | --- | --- | --- |
| Entry price | $9/month | $15/month | Free (limited) | $6/month |
| Entry volume | 10k pvs | 100k pvs | Unlimited (Free) | 10k pvs |
| Sites included | 1-10+ | 50 | 5-20+ | 50 |
| Data location | EU (Hetzner) | EU (AWS Frankfurt) | Netherlands | Germany |
| Legal entity | Estonia (EU) | Canada | Netherlands (EU) | Germany (EU) |
| IP hash | Yes (daily) | Yes | No | Yes |
| Open source | Yes (CE) | No | No | Yes (partial) |
| Retention | 3-5 years | Unlimited | 30d - 5 years | Unspecified |
| GA4 import | Yes | Yes | No | Yes |
| Funnels | Yes (Business) | No | No | Yes (basic) |
| GSC integration | Yes | No | No | Yes |
| Script | < 1 KB | ~2 KB | ~6 KB | < 1 KB |

FAQ

Plausible, Fathom or Simple Analytics: which is cheapest?

It depends on volume. For under 10,000 pageviews per month, Pirsch is cheapest ($6/month). Among the three main solutions, Plausible is most affordable at low volume ($9/month for 10k pvs). At 100,000 pageviews, Plausible and Fathom converge around $15/month. Beyond that, Plausible generally remains cheaper, but its features are spread across multiple plans (Starter, Growth, Business).

Is Plausible truly GDPR compliant without a cookie banner?

Plausible is designed to work without cookies. Their identification method uses a daily-rotated IP hash, with no raw address stored. Under the criteria set by the CNIL for consent exemption (and similar guidance from the ICO and other European DPAs), this approach is accepted when strictly limited to audience measurement with no cross-referencing with other processing. However, the "personal data" status of an IP hash is subject to ongoing legal debate. The prudent approach is to consult your DPO and document your analysis in your processing register.

Is Fathom a good fit for agencies managing many client sites?

Yes, this is one of its strongest points. Fathom includes up to 50 sites in every plan, with separate dashboards. Unlimited data retention and automated email reports make it well suited for multi-client management.
However, Fathom does not offer white-labelling or per-user permission management on the standard plan.

What is the difference between Plausible Cloud and Plausible Community Edition?

Plausible Cloud is the hosted, managed service run by the Plausible team (from $9/month). Plausible Community Edition (CE) is the open-source version, self-hostable for free. But CE does not include all cloud features: marketing funnels, e-commerce revenue tracking and the Sites API are excluded. CE is suited for developers who want basic analytics on their own server.

Are there solutions even cheaper than these three?

Yes. Umami is entirely free to self-host (open source, MIT licence). Pirsch starts at $6/month. And for very small sites, Simple Analytics offers a free plan with 30 days of retention. Beyond these options, it is also worth considering that "cheapest" is not always most economical: ease of installation, infrastructure reliability and company sustainability have real value. A tool that disappears or locks your dashboard when you exceed your quota costs more than a slightly higher subscription.

Last updated: February 2026. Pricing and features verified on official solution websites. This article will be updated at minimum every six months.

The Persistent Myth: Do You Actually Need Google Analytics for SEO?

It's the first question we hear whenever we mention alternatives to Google Analytics: "But if I remove the Google script from my site, will Google punish me? Will I lose my rankings?" The short answer is: No. The longer answer is that you're probably confusing two tools with very different roles, and that, paradoxically, removing Google Analytics could actually improve your rankings. Here's why, with sources.

1. The Fundamental Confusion: Analytics ≠ Search Console

There are two major tools in Google's ecosystem for website owners. Many people confuse them, which keeps the myth alive.

Google Search Console (GSC): Your Line to Google

This is the tool for communicating with the search engine. It tells you:

- How Google sees your site (indexing status, crawl errors).
- Which keywords you rank for.
- How many clicks and impressions you get.
- Whether your site has technical issues (mobile, Core Web Vitals).

This one is essential for SEO. It's free, lightweight (no script to install on your site), and it's the only reliable source of data on your actual search rankings. Good news: Search Console works independently of Google Analytics. You can use one without the other.

Google Analytics (GA4): An On-Site Behavior Observer

GA4 watches what visitors do after they arrive on your site. It doesn't help improve your search rankings. Its role is to measure engagement, journeys, and conversions: useful marketing information that has no impact on the search algorithm.

2. Google Has Confirmed It: Analytics Is Not a Ranking Factor

This isn't speculation. Google has said it explicitly, multiple times. John Mueller, Search Advocate at Google (one of the official spokespeople for the Search team), has clearly stated that using Google Analytics is not a ranking factor. He has addressed this question on Twitter/X and during Google Search Central sessions, confirming that Google Search and Google Analytics are separate products and that one doesn't influence the other.
→ Source: Google Search Central – How Search ranking works

Gary Illyes, an analyst on the Google Search team, reinforced this point by explaining that the search engine doesn't use Google Analytics data for ranking, notably because not all sites install it and doing so would create an unfair bias. In other words: removing the Google Analytics script from your site sends no "negative signal" to Google. The search engine doesn't know (and doesn't care) which analytics tool you use.

3. The Paradox: GA4 Can Actually Hurt Your SEO

It's counterintuitive, but the Google Analytics script can actively harm your search rankings. Here's how.

3.1 Script Weight

Google (the search engine) favors fast websites. Core Web Vitals, a set of web performance metrics, have been a confirmed ranking factor since 2021. The GA4 script is not lightweight. The gtag.js tag loads multiple JavaScript libraries for advertising tracking, consent management, and advanced data collection. The total weight can reach 45 KB or more (compressed), plus network requests to collection servers.

For comparison, here's the typical script weight of competing analytics tools:

| Solution | Script size (compressed) | Ratio vs GA4 |
| --- | --- | --- |
| GA4 (gtag.js) | ~45 KB | Baseline |
| Matomo | ~20 KB | 2× lighter |
| Simple Analytics | ~6 KB | 7× lighter |
| Fathom | ~2 KB | 22× lighter |
| Plausible | ~1 KB | 45× lighter |
| Pirsch | <1 KB | 50× lighter |

An independent audit by Bejamas measured the concrete impact of third-party scripts on web performance and showed that analytics scripts are among the heaviest contributors to main-thread blocking time (Total Blocking Time, a lab metric that is not itself a Core Web Vital but tracks the responsiveness problems INP measures).

→ Source: Bejamas – How Popular Scripts Slow Down Your Website

3.2 The Concrete Impact on Core Web Vitals

When a heavy script loads, it impacts three key metrics:

- LCP (Largest Contentful Paint): the time before the main content is visible. A heavy script delays rendering.
- INP (Interaction to Next Paint): responsiveness to clicks. A script that monopolizes the main thread degrades interactivity.
- CLS (Cumulative Layout Shift): visual stability. Some late-loading scripts cause layout shifts.

Switching from a 45 KB script to a 1 KB script won't single-handedly turn a slow site into a fast one. But on an already-optimized site, it's often the kind of detail that tips a Core Web Vitals score from "needs improvement" to "good", and every millisecond counts when Google evaluates performance.

→ Source: Google – Core Web Vitals & Page Experience

3.3 Slower Sites = Fewer Conversions

Beyond pure SEO, speed directly impacts conversion rates. Google has published data showing that when mobile page load time goes from 1 to 3 seconds, the probability of bounce increases by 32%. Each additional second makes it worse.

→ Source: Think with Google – Mobile Speed Benchmarks

Bottom line: by switching to a lightweight analytics tool, you improve your load time, which is both a confirmed ranking factor for Google and a conversion driver.

4. The Winning Duo for SEO in 2026

To run your search strategy effectively without the bloat, here's the ideal setup:

Google Search Console (Essential, and Free)

This is your source of truth for SEO. Use it to:

- Monitor your positions and clicks in search results.
- Identify which keywords bring traffic (and which ones you're gaining on).
- Detect indexing errors and technical issues.
- Track your Core Web Vitals over time.

No analytics tool, no matter how powerful, can replace this data: only Google knows which keywords you actually rank for.

A Frugal Analytics Tool (For Conversion Tracking)

Search Console tells you where the traffic comes from, but it doesn't tell you what happens next. To know whether your SEO visitors convert into customers, you need an on-site measurement tool, but it doesn't need to be complex. The 5 essential KPIs are enough: visitors, sources, top pages, key events, conversions. A frugal analytics tool gives you those answers in seconds, without weighing down your site.
What You Gain with This Duo

- Better technical SEO: a faster site thanks to a lightweight script.
- Complete SEO data: rankings (via GSC) + conversions (via frugal analytics).
- Simpler compliance: no cookie banner if your analytics meets consent exemption criteria.
- Time saved: two simple interfaces instead of one bloated platform.

5. Real-World Examples: Who Dropped GA4 and What Happened?

Many websites have migrated from Google Analytics to frugal solutions with zero negative impact on their SEO. Plausible Analytics regularly documents testimonials from companies that made this transition. The pattern is always the same: no ranking loss, often improved load times, and simplified privacy compliance.

Basecamp (a well-known SaaS company) publicly adopted Plausible as a replacement for Google Analytics. Hugging Face (a major AI platform) did the same. Neither observed any SEO degradation; quite the opposite.

The explanation is logical: Google ranks sites based on content quality, authority (backlinks), technical health (speed, mobile-friendliness), and user experience. None of these criteria depend on the brand of your visitor counter.

6. How to Switch: A Practical Migration Path

If you're ready to make the move, here's a low-risk approach that minimizes disruption.

Week 1: Install the new tool alongside GA4

Choose a frugal analytics tool (see our comparison guide for help choosing). Install its tracking script on your site; it's typically a single line of code. Keep GA4 running simultaneously. This gives you a parallel data period to compare.

Weeks 2-4: Compare the data

Over 3-4 weeks, compare key metrics between GA4 and the new tool. You'll likely notice the frugal tool reports more visitors, because it doesn't depend on cookie consent. Traffic sources and top pages should align closely. Conversions should match if you've configured events properly.

Week 5: Remove GA4

Once you're confident the new tool captures everything you need, remove the GA4 script from your site.
You'll immediately see a performance improvement (check your Core Web Vitals in Search Console). Keep your GA4 account open for a few months if you want to reference historical data.

What about historical data?

Some tools (Plausible in particular) offer GA data import for historical continuity. The imported data is limited to aggregated metrics, with no individual user profiles, which is consistent with the privacy-first approach. For most SMBs, this is more than enough to maintain trend visibility.

Conclusion: Cut the Cord Without Fear

Don't be afraid to remove Google Analytics. Your search rankings depend on your content quality and site speed, not on the brand of your measurement tool. In fact, lightening your site by replacing a heavy script with a frugal one is often the best gift you can give your SEO. You gain in performance, in compliance, and in clarity, while keeping the data that truly matters through the Search Console + frugal analytics duo.

The real risk isn't leaving GA4. It's continuing to fly blind because your tool is too complicated to actually use.

FAQ: SEO and Google Analytics

Does Google penalize sites that don't use Google Analytics?

No. Google has confirmed multiple times that using Google Analytics is not a ranking factor. The search engine evaluates content quality, technical health (speed, mobile), authority (inbound links), and user experience, not the brand of the analytics tool installed.

Is Search Console enough for SEO?

For tracking SEO performance (positions, clicks, impressions, technical errors), yes. Search Console is the essential, irreplaceable tool. To go further (measuring conversions, understanding post-arrival behavior), a complementary analytics tool is useful, but it doesn't need to be GA4.

What's the real impact of a heavy analytics script on SEO?

GA4's script weighs approximately 45 KB compressed. Frugal solutions weigh between 1 and 6 KB.
This difference impacts Core Web Vitals (LCP, INP), which have been a confirmed ranking factor since 2021. On an already-optimized site, switching from a heavy to a lightweight script can be enough to push performance scores from "needs improvement" to "good."

Can I use Search Console without Google Analytics?

Yes, absolutely. The two tools work independently. You can connect Search Console to some frugal solutions (Plausible and Pirsch offer this integration) to see your SEO data directly in your analytics dashboard.

If GA4 doesn't help with SEO, what is it for?

GA4 is a user behavior measurement and marketing attribution tool. It's designed for teams that need detailed conversion funnels, behavioral cohorts, and integrations with the Google advertising ecosystem (Google Ads). For pure SEO, it adds nothing that Search Console doesn't already provide, and its weight can actually hurt your rankings.
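As a quick check on the script-weight comparison in this article, the "ratio vs GA4" figures can be recomputed from the approximate sizes quoted in section 3.1. This is a minimal sketch: the sizes are the article's own rounded estimates, and the helper name `ratio_vs_ga4` is an assumption for illustration.

```python
# Approximate compressed script sizes in KB, as quoted in section 3.1 of this article.
SCRIPT_KB = {
    "GA4 (gtag.js)": 45,
    "Matomo": 20,
    "Simple Analytics": 6,
    "Fathom": 2,
    "Plausible": 1,
}


def ratio_vs_ga4(tool: str) -> float:
    """How many times lighter `tool` is than the GA4 script."""
    return SCRIPT_KB["GA4 (gtag.js)"] / SCRIPT_KB[tool]


for tool in SCRIPT_KB:
    print(f"{tool}: {ratio_vs_ga4(tool):.2f}x lighter than GA4")
```

The exact quotients (2.25, 7.5, 22.5, 45) match the rounded-down ratios in the table. Byte size is only a proxy: the measurable effect on Core Web Vitals also depends on how and when the script is loaded.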

- 05 Jan, 2026

Stop Sending PDF Reports Your Clients Don't Read

It's the end of the month. For every freelancer or project manager at an agency, it's time for the dreaded ritual: monthly reporting. You export PDFs from Google Analytics, take screenshots, add three comments in an email, and hit send. Time spent: 2 to 4 hours.

The reality? Your client opens the PDF, glances at the first chart, has no idea why the "Engagement Rate" dropped by 0.4%, and closes the document. You've lost half a day. And they didn't perceive the value of your work.

1. The Problem with "Accounting-Style" Reports

Why it doesn't work

The classic mistake is trying to prove you've been working by showing lots of numbers. "Look, there are 45 pages of charts, so clearly I've been busy." But your client (often an SMB owner) isn't paying you for charts. They're paying for results and peace of mind. Sending them a complex report is like handing the problem back to them. They don't know what to do with it, and they end up questioning your usefulness.

What the research says

According to HubSpot's surveys on agency practices, reporting is consistently cited as one of the most time-consuming and least client-valued tasks. The paradox is cruel: the more time you spend on the report, the less it gets read, because its very length discourages reading.

Research by Databox among marketing professionals shows that most clients want short, results-oriented reporting, not exhaustive audits. The most satisfied clients are those who receive a summary of 5 to 10 metrics maximum, accompanied by a clear recommendation.

→ Sources: HubSpot – State of Marketing Report, Databox – Reporting Best Practices

2. The Method: Less Data, More Story

For a report to be read, understood, and appreciated, it needs to tell a simple story in 4 acts. This is Data Storytelling applied to client reporting.

Act 1: The "One-Liner" (The Weather Report)

Start your reporting email with a single sentence that summarizes the month.
Your client should understand the trend without opening any attachment.

- Bad: "Here are this month's statistics."
- Good: "Record month for traffic (+20%), but slight dip in qualified leads. We know why and we're fixing it."

This sentence is the ultimate test. If you can't summarize the month in one line, you haven't found the insight yet. Go back to the numbers.

Act 2: The 3 Macro KPIs (The Dashboard)

Don't include everything. Only show the goals defined at the start of the contract, and report on progress. Three metrics are enough in the vast majority of cases. For a brochure site or blog, these are typically the 5 essential KPIs, but in client reporting, condense further: total traffic, number of leads/sales, and the "star metric" of the month (the one that moved the most, for better or worse).

Present these numbers with comparison to the previous month and the target. A 3-row table beats a 40-page PDF every time.

| Metric | Target | Last Month | This Month | Trend |
| --- | --- | --- | --- | --- |
| Unique visitors | 5,000 | 4,200 | 5,100 | ✅ +21% |
| Form submissions | 30 | 28 | 22 | ⚠️ -21% |
| Cost per lead (if Ads) | < $15 | $12 | $18 | ❌ +50% |

Act 3: "What We Did" → "What It Produced"

This is where you sell your work, but by connecting it to results, not listing tasks.

- Bad: "We published 4 blog posts, optimized 3 pages, and adjusted keywords."
- Good: "The GDPR article (published on the 15th) generated 450 qualified visits from LinkedIn → 8 form submissions. The 3 optimized pages moved from page 3 to page 1 for their target keywords."

The principle is to systematically link the action to the impact. If an action hasn't produced measurable results yet, say so honestly ("impact expected in 4-6 weeks, we're monitoring").

Act 4: The Plan for Next Month

This is the most important part, and the most often forgotten. A report shouldn't just look in the rearview mirror. It should sell what's next.

- "Since LinkedIn traffic is growing (+40% over 2 months), next month we'll double the posting frequency."
- "The drop in leads is coming from a form that's too long (5 fields instead of 3). We're simplifying it next week."

This final act reassures your client about two things: you understand what's happening, and you have a plan. That's what builds loyalty, far more than a 45-page PDF.

3. Before/After: Same Month, Two Reports

To make the method concrete, here's the same period presented two ways.

❌ The "Accounting" Report (What You Might Be Doing)

- 3-line email ("Here's this month's report, let me know if you have questions").
- 38-page PDF attached, exported from GA4.
- Uncommented charts: bounce rate, session duration, user flows, demographic reports.
- Production time: 3.5 hours.
- Probability the client reads it fully: ~5%.
- Client perception: "I don't understand, but I suppose things are fine."

✅ The "Frugal" Report (The 4-Act Method)

- Structured email of 15 lines maximum (One-Liner + 3 KPIs + Actions/Results + Plan).
- A 3-5 row table embedded in the email (no attachment).
- Optional: a link to a shared real-time dashboard (Plausible, Fathom, etc. allow this).
- Production time: 45 minutes.
- Probability the client reads it: ~80%.
- Client perception: "My agency has things under control. They understand my business."

The gain is triple: you spend less than a quarter of the time (45 minutes instead of 3.5 hours), the client understands 10x better, and your perceived value increases. That's a virtuous cycle.

4. Your Tool Shapes Your Report

There's a reason the "Accounting" report persists: the tool produces it. Google Analytics generates multi-page PDF reports because it has dozens of reports. When your tool is an overengineered machine, your reporting inherits that complexity.

The reverse is also true. When your analytics tool shows a dashboard that fits on one screen (visitors, sources, top pages, conversions), your reporting flows naturally from it. You don't export 40 charts: you take a screenshot and comment on it.
This is one of the strongest arguments for switching to a frugal analytics tool when managing multiple clients: reporting time drops almost automatically because there's nothing to "filter." The tool only shows what matters.

Some solutions (Plausible, Fathom) even offer read-only shareable dashboards: you give your client a link, and they can check their stats whenever they want, without bothering you. The monthly report then becomes a strategic commentary email, not a data export.

5. The Multi-Client Challenge

For an agency or freelancer managing 10, 20, or 30 sites, the reporting problem multiplies. At 3 hours per client, that's 30 to 90 hours per month of pure reporting. That's unsustainable, and it's time you're not spending on strategy, execution, or acquiring new clients.

The solution comes from three levers:

- Standardize the format. Use the same 4-act template for all clients. Only the numbers change. Create a reusable email template with placeholders for the One-Liner, the 3 KPIs, the Actions/Results, and the Plan. Once the structure is muscle memory, you'll fill it in 20 minutes per client.
- Choose a multi-site tool. Frugal solutions typically let you manage all your clients from a single interface, with one dashboard per site. The analytics comparison guide details site limits by plan. Being able to switch between clients in one click (instead of logging into separate GA4 accounts) is a massive time-saver.
- Automate the sharing, not the analysis. Instead of exporting PDFs, share a link to the real-time dashboard and send a strategic commentary email. The client has 24/7 access to the numbers, and your value-add is in the analysis, not the extraction. This also reduces "where are my stats?" emails between reporting cycles.

The compounding effect

When you standardize reporting across 15 clients and switch to a frugal tool with shareable dashboards, the math changes dramatically. Instead of 15 × 3 hours = 45 hours/month, you're looking at 15 × 40 minutes = 10 hours/month.
That's 35 hours reclaimed, nearly a full work week, that you can reinvest in billable work, business development, or simply not burning out.

More importantly, your clients get better reporting. A 10-line email they actually read beats a 40-page PDF they ignore. Your retention improves because clients feel understood, not buried under data they didn't ask for.

Conclusion: Sell Intelligence, Not Spreadsheets

A good analytics tool shouldn't serve as a PDF generator. It should help you find the insight (the key trend, the opportunity, the red flag) that you'll share with your client in 3 sentences.

If your tool is too complicated, you spend your time searching for data. If it's simple, you spend your time analyzing it. That analysis is what justifies your fee, not the number of pages in the PDF.

In 2026, an agency's value is no longer in extracting data. It's in translating data into business decisions. And for that, you need to start by measuring only what matters.

FAQ: Client Reporting

How much time should I spend on monthly reporting per client?

With the 4-act method, plan for 30 to 60 minutes per client. If you regularly exceed 2 hours, it's a signal that your analytics tool is too complex or that you haven't standardized your format. The goal is for time spent analyzing to exceed time spent extracting.

Should I fully automate reports?

No. Full automation (scheduled exports, auto-sent emails) produces noise. Your client receives a PDF without context and doesn't know what to do with it. The ideal is a hybrid: data is accessible automatically (shared dashboard), and your value-add is the monthly strategic commentary, which you write yourself.

Which KPIs should I show an e-commerce client?

For e-commerce, the 3 macro KPIs are: revenue generated by the site, conversion rate (visitors → buyers), and customer acquisition cost (if running ads). Everything else is operational detail you manage internally.
Your client wants to know how much the site earned, not how many people clicked "Add to Cart."

My client insists on a detailed GA4 report. What should I do?

Educate them gradually. Send the frugal 4-act format alongside the PDF they're asking for. After 2-3 months, ask which one they actually read. In 90% of cases, they'll admit they only read your email, and you can then drop the PDF.
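
If you want to turn the 4-act format into a reusable template, the structure can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a feature of any analytics tool: the names `Kpi`, `trend_pct`, and `render_report` are hypothetical, and the sample figures are the ones from the KPI table in Act 2. The trend is the plain month-over-month change, (this month - last month) / last month.

```python
from dataclasses import dataclass


@dataclass
class Kpi:
    """One row of the 3-KPI table (hypothetical structure, for illustration)."""
    name: str
    target: str        # free text, e.g. "5,000" or "< 15"
    last_month: float
    this_month: float


def trend_pct(kpi: Kpi) -> int:
    """Month-over-month change, rounded to a whole percent."""
    return round((kpi.this_month - kpi.last_month) / kpi.last_month * 100)


def render_report(one_liner: str, kpis: list[Kpi],
                  results: list[str], plan: list[str]) -> str:
    """Assemble the four acts into a plain-text email body."""
    lines = [one_liner, "", "Key numbers:"]
    for k in kpis:
        lines.append(
            f"- {k.name}: {k.this_month:g} "
            f"(last month {k.last_month:g}, target {k.target}, {trend_pct(k):+d}%)"
        )
    lines += ["", "What we did and what it produced:"]
    lines += [f"- {item}" for item in results]
    lines += ["", "Plan for next month:"]
    lines += [f"- {item}" for item in plan]
    return "\n".join(lines)


# Sample data taken from the KPI table in Act 2.
kpis = [
    Kpi("Unique visitors", "5,000", 4200, 5100),
    Kpi("Form submissions", "30", 28, 22),
    Kpi("Cost per lead ($)", "< 15", 12, 18),
]

report = render_report(
    one_liner="Record month for traffic, but slight dip in qualified leads. "
              "We know why and we're fixing it.",
    kpis=kpis,
    results=["The GDPR article generated 450 qualified visits from LinkedIn "
             "and 8 form submissions."],
    plan=["Simplify the contact form from 5 fields to 3."],
)
print(report)
```

With this sample data the email stays within the 15-line budget. Only the assembly is automated here: the one-liner, the results, and the plan still have to be written by hand, which is consistent with automating the sharing rather than the analysis.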